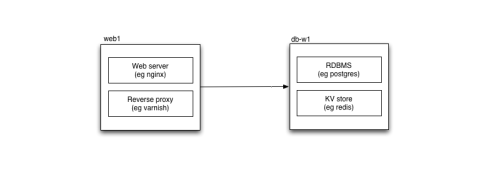

As soon as you scale beyond one host, whether in a data centre or in the cloud, you need a way to resolve services. Where previously every service (your database, your key-value store) was local to your application, now some will be on other hosts, and your software needs to find them.

Probably the most common form of resolution currently is the Domain Name System, or DNS. (DNS is used as part of Active Directory, which you’ll probably use if you run a Windows-based data centre.) Using DNS has a number of advantages:

- wide support; every operating system you’re likely to use will have DNS support out of the box

- distributed, fault-tolerant, and secure

- you’re already using it (for mail routing, web routing and so forth)

Enter the cloud

From a certain standpoint, using cloud hosting such as Amazon EC2 makes no difference to this picture. In the cloud it’s more common to tear down and replace instances (hosts), but the shift between different available instances can be managed using configuration systems such as puppet or chef to update DNS easily enough. There is, however, a better way.

Let’s take a step back for a moment and ask: what are we trying to do?

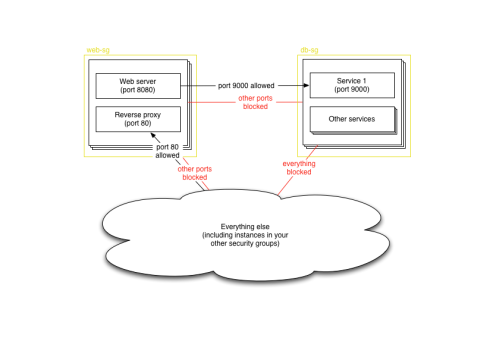

What we want is a way to resolve from function to host. DNS allows us to do this by naming functions within a zone, but in the cloud there’s actually an existing mechanism that closely models what we want already: security groups. (Security groups seem to be only available with Amazon EC2 at the moment; it’s possible that you can do similar things using metadata keys with Rackspace, although you’ll have to do more work to tie it into your explicit iptables configuration.)

Security groups are a way of controlling firewall rules between classes of instances in the cloud. So it’s not uncommon to have a security group for web access (port 80 open to the world), or database access (your database port open only to those other security groups, such as your web servers, that need it). Although they’re called security groups, the most logical way of using them is often as functional groups of instances.

Since you already need to define instances in terms of their security groups, why not resolve using them as well? The Amazon APIs make it easy for instances to find out which security groups they are in, as well as for other instances to look up which instances are in a particular group. Hosts can even be in multiple groups, allowing them to perform multiple functions at once.
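As a rough illustration of that lookup, the sketch below groups running instances by security group, working from data shaped like the response of EC2's DescribeInstances call (for example via boto3's `describe_instances`). The helper name `hosts_by_group` and the sample data are made up for the example; the real API call is left in a comment because it needs credentials.

```python
from collections import defaultdict


def hosts_by_group(reservations):
    """Map each security group name to the private IPs of its running instances.

    `reservations` is the "Reservations" list of a DescribeInstances-style
    response. An instance in several groups is listed under each of them.
    """
    groups = defaultdict(list)
    for reservation in reservations:
        for instance in reservation.get("Instances", []):
            if instance.get("State", {}).get("Name") != "running":
                continue
            for group in instance.get("SecurityGroups", []):
                groups[group["GroupName"]].append(instance["PrivateIpAddress"])
    return dict(groups)


# Hypothetical sample data. With boto3 it would come from something like:
#   boto3.client("ec2", region_name="us-east-1").describe_instances()["Reservations"]
sample = [
    {"Instances": [
        {"PrivateIpAddress": "10.0.0.1",
         "State": {"Name": "running"},
         "SecurityGroups": [{"GroupName": "tphon"}, {"GroupName": "ssh-access"}]},
        {"PrivateIpAddress": "10.0.0.2",
         "State": {"Name": "stopped"},
         "SecurityGroups": [{"GroupName": "tphon"}]},
    ]},
    {"Instances": [
        {"PrivateIpAddress": "10.0.0.3",
         "State": {"Name": "running"},
         "SecurityGroups": [{"GroupName": "dumbo"}]},
    ]},
]

resolved = hosts_by_group(sample)
```

Here the first instance shows up under both of its groups, and the stopped one is left out.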

This is how elasticsearch performs cluster resolution for EC2: you give it Amazon IAM credentials that can look up instances by security group, and a list of security groups, and elasticsearch will look for other instances in those security groups, bringing them into the cluster. Spin up a new elasticsearch instance in the right security group, and it joins the cluster automatically.

```yaml
cloud:
  aws:
    access_key: YOUR_AWS_ACCESS_KEY
    secret_key: YOUR_AWS_SECRET_KEY
index:
  translog:
    flush_threshold: 10000
discovery:
  type: ec2
  ec2:
    groups:
      - airavata
gateway:
  recover_after_nodes: 1
  recover_after_time: 2m
  expected_nodes: 2
bootstrap:
  mlockall: 1
```

We do the same thing at Artfinder for our web servers to resolve database instances and so on.

```python
AWS_REGION, AWS_PLACEMENT = get_aws_region_and_placement()
TPHON_HOSTS = find_active_instances("tphon", AWS_REGION)
DUMBO_HOSTS = find_active_instances("dumbo", AWS_REGION)
```

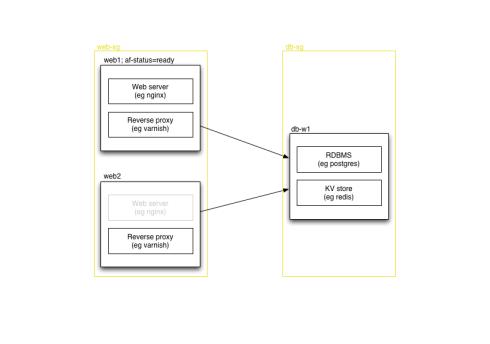

In some cases, you need to choose only one machine to use out of several in the security group, perhaps because you have several different instances with the same data available, or perhaps because you’re bringing up a new instance which isn’t yet ready. You could try connecting to each one to find a genuinely running service, or you could use EC2’s facility for tagging instances. We use a tag of af-status=ready on anything that’s in service, and we can check that when finding database instances, or when figuring out which machines should be attached as web servers to our load balancers.
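For comparison, the connect-and-see option could be sketched roughly like this, using only the standard library. The function name `first_reachable` and the one-second default timeout are assumptions made for the example, and a successful TCP connection only shows that something is listening, not that the service behind it is healthy.

```python
import socket


def first_reachable(hosts, port, timeout=1.0):
    """Return the first host accepting TCP connections on `port`, or None."""
    for host in hosts:
        try:
            # create_connection raises OSError on refusal, timeout or DNS failure.
            with socket.create_connection((host, port), timeout=timeout):
                return host
        except OSError:
            continue
    return None
```

Calling something like `first_reachable(candidate_hosts, 5432)` would then pick the first candidate with an open PostgreSQL port. The tag-based check avoids this probing altogether, at the cost of having to keep the tags accurate.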

We do this with a very simple filter on top of the security group-based instance resolution code:

```python
from awslib.ec2 import instances_from_security_group


def find_active_instances(security_group, AWS_REGION='us-east-1'):
    def active(instance):
        # In service means running and tagged af-status=ready.
        if instance.state != 'running':
            return False
        elif instance.tags.get('af-status', None) != 'ready':
            return False
        else:
            return True

    return filter(
        active,
        instances_from_security_group(
            security_group,
            region=AWS_REGION,
            aws_access_key="YOUR_AWS_ACCESS_KEY",
            aws_secret_key="YOUR_AWS_SECRET_KEY",
        )
    )
```

Tags have a considerable advantage over security groups in that they can be altered after you spin up an instance; you could in fact use only tags to resolve instances if you chose, although since you still need to provide firewall rules at the security group level, a mix seems best to us.
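The same mix of group and tag can also be pushed to the EC2 API as server-side filters, rather than filtering in application code. The sketch below only builds the filter lists in the shape boto3's `describe_instances(Filters=...)` accepts; the helper names are invented for the example, and the `role` tag in the tag-only variant is hypothetical.

```python
def group_and_tag_filters(security_group, tag_key="af-status", tag_value="ready"):
    """Filters for running instances in a group that carry a given tag."""
    return [
        {"Name": "instance.group-name", "Values": [security_group]},
        {"Name": "instance-state-name", "Values": ["running"]},
        {"Name": "tag:" + tag_key, "Values": [tag_value]},
    ]


def tag_only_filters(tags):
    """Filters for running instances matching every key/value pair in `tags`."""
    filters = [{"Name": "instance-state-name", "Values": ["running"]}]
    for key in sorted(tags):
        filters.append({"Name": "tag:" + key, "Values": [tags[key]]})
    return filters


mixed = group_and_tag_filters("tphon")
tags_only = tag_only_filters({"role": "postgres", "af-status": "ready"})

# With boto3 installed and credentials configured, usage would look like:
#   boto3.client("ec2", region_name="us-east-1").describe_instances(Filters=mixed)
```

Filtering on the server keeps the response small, but the firewall rules still come from the security group, which is why the tag-only variant is the less complete of the two.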

Tags allow us to have a security group for all our backend data storage services — to start with everything’s always on one host — and then migrate services away smoothly, simply by moving tags around. (Obviously there is some complexity to do with replication as we migrate services, or some downtime is required in some cases.) We don’t have to deploy code, and we don’t have to wait for DNS changes to propagate (even with a low TTL there’s a short period of time to wait) — we just flip a switch, either in Amazon’s web console or via the EC2 API, both of which are already distributed and can be accessed from anywhere given the right credentials. Most crucially, the web console is already written for us — we have a small amount of code to take advantage of security group and tagging for resolution, and that’s it.

In actual fact, our running instances don’t re-resolve continually, since that’s a performance hit; but we can reload the code on command from fabric, from anywhere, as well, and they’ll resolve the new setup as they reload. Flipping a service from one machine to another takes a few seconds, on top of whatever work has to be done to migrate data.
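One way to get that behaviour, resolving once and resolving again only when told to, is a small cache with an explicit reload. This is a sketch under assumptions rather than a description of any particular codebase: `CachedResolver` is an invented name, and `resolve` stands in for whatever lookup function is actually used.

```python
class CachedResolver:
    """Resolve each group once, then serve from memory until reload()."""

    def __init__(self, resolve):
        self._resolve = resolve  # callable: group name -> iterable of hosts
        self._cache = {}

    def hosts(self, group):
        if group not in self._cache:
            # Only the first request for a group hits the (slow) lookup.
            self._cache[group] = list(self._resolve(group))
        return self._cache[group]

    def reload(self):
        """Forget everything, so the next hosts() call resolves afresh."""
        self._cache.clear()


# Stand-in lookup for the example; a real one would query the EC2 API.
lookups = []

def fake_resolve(group):
    lookups.append(group)
    return ["10.0.0.%d" % len(lookups)]

resolver = CachedResolver(fake_resolve)
first = resolver.hosts("tphon")
second = resolver.hosts("tphon")   # served from the cache
resolver.reload()
third = resolver.hosts("tphon")    # resolved again after the reload
```

A management command would then only need to trigger `reload()` in each running process.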

Configuration management

In general, if you make the same configuration change twice you’re doing it wrong. Configuration management allows you to specify how all your hosts are set up (packages installed, services running, everything) so you can apply it automatically to new hosts, or upgrade existing ones as configuration changes. Two of the most common configuration management systems today are puppet and chef; we use puppet. The crucial part when running several different types of host is to figure out which configuration to apply for a given host. One way is to use hostnames, or MAC addresses, but this doesn’t work well in the cloud, where you can’t predict anything about an instance before you build it. However, you can control what security group it’s in; we have a custom fact that looks up the security groups an instance is in and matches that to a configuration.

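```ruby
require 'open-uri'

# Ask the EC2 metadata service which security groups this instance is in.
# (URI.open is needed on current Ruby; a bare open() no longer fetches URLs.)
groups = URI.open("http://169.254.169.254/2008-02-01/meta-data/security-groups").read
groups = groups.split("\n")
# Ignore the ssh-access group when picking the group to match on.
groups = groups.select {|g| g != 'ssh-access' }
if groups.size > 0
  sec_group = groups.first
  Facter.add("ec2_security_group") {
    setcode { sec_group }
  }
  puts "SECURITY GROUP #{sec_group}"
end
```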

We combine this with the Ubuntu EC2 AMIs’ user-data init script system to automatically puppetize new instances on startup. For instance, to bring up a new search server we just do:

```
fab new_airavata:puppet_version=2011-10-12T10.42.01
```

A few minutes later, our search cluster has a new member, and can start redistributing data within it. (Although it uses fabric, it’s actually just python using boto, an EC2 library. We use fabric to perform management tasks on existing instances, so it makes sense to use it to fire up new ones as well.)
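To give a feel for what such a task has to assemble, here is a hedged sketch that builds the arguments for an EC2 RunInstances call. It is not the actual fabric task: the function names, the bootstrap script contents, the `/etc/puppet-version` path, the AMI ID and the instance type are all placeholders invented for illustration, and it targets boto3's `run_instances` rather than the older boto library mentioned above.

```python
import shlex


def user_data_script(puppet_version):
    """Build a shell script for the instance to run on first boot."""
    return "\n".join([
        "#!/bin/sh",
        "# Hypothetical bootstrap steps; a real script would go on to fetch",
        "# and apply the named puppet configuration.",
        "echo {} > /etc/puppet-version".format(shlex.quote(puppet_version)),
        "apt-get update && apt-get install -y puppet",
        "",
    ])


def launch_params(security_group, puppet_version, image_id, instance_type):
    """Keyword arguments for launching one instance into a security group."""
    return {
        "ImageId": image_id,
        "InstanceType": instance_type,
        "MinCount": 1,
        "MaxCount": 1,
        "SecurityGroups": [security_group],
        "UserData": user_data_script(puppet_version),
    }


# Placeholder AMI ID and instance type; substitute real values.
params = launch_params("airavata", "2011-10-12T10.42.01", "ami-00000000", "m1.large")

# With boto3 installed and credentials configured, this would launch it:
#   boto3.client("ec2", region_name="us-east-1").run_instances(**params)
```

The security group does double duty here: it sets the firewall rules, and it is what both puppet and the cluster discovery later key on.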

It’s a bit more work to bring up a new webserver (currently we like to test them rather than putting them automatically in rotation at the load balancer), and database servers tend to be the hardest of all because they require hostnames to be set in textual configuration for things like replication configuration — although this can be managed with puppet, and we’ll get there eventually.

A slightly crazy idea

The big advantage of DNS over security group resolution is the ubiquity of support for DNS lookups. Since this goes right down into using DNS hostnames for resolution within configuration files (such as for replication setup, as mentioned above), it might be helpful to run a DNS server that exposes security groups and tags as a series of nested DNS zones:

```
; Origin is: ec2.example.com
1.redis.tphon               IN A 10.0.0.1
2.redis.tphon               IN A 10.0.0.2
3.redis.tphon               IN A 10.0.0.3
1.postgres.tphon            IN A 10.0.0.3
2.postgres.tphon            IN A 10.0.0.4
1.readonly.postgres.tphon   IN A 10.0.0.5
2.readonly.postgres.tphon   IN A 10.0.0.6
```

We actually already do something similar for generating munin configuration, and it could be done entirely by a script regenerating a zonefile periodically; if anyone writes this, it’d be interesting to play with.
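A first stab at the record-generating half of such a script might look like the following. It assumes each instance has already been reduced to a dict with `ip`, `group` and `services` keys (the service labels would presumably come from tags); that input shape, the function name `zone_records` and the numbering by IP order are all choices made for this sketch.

```python
import ipaddress
from collections import defaultdict


def zone_records(instances):
    """Return zone-file A records named <n>.<service>.<group>."""
    members = defaultdict(list)
    for instance in instances:
        for service in instance["services"]:
            members[(instance["group"], service)].append(instance["ip"])

    lines = []
    for (group, service), ips in members.items():
        # Number hosts within each service in ascending IP order.
        for n, ip in enumerate(sorted(ips, key=ipaddress.ip_address), start=1):
            lines.append("{}.{}.{} IN A {}".format(n, service, group, ip))
    return lines


# Hypothetical inventory, chosen to reproduce the records shown above.
inventory = [
    {"ip": "10.0.0.1", "group": "tphon", "services": ["redis"]},
    {"ip": "10.0.0.2", "group": "tphon", "services": ["redis"]},
    {"ip": "10.0.0.3", "group": "tphon", "services": ["redis", "postgres"]},
    {"ip": "10.0.0.4", "group": "tphon", "services": ["postgres"]},
    {"ip": "10.0.0.5", "group": "tphon", "services": ["readonly.postgres"]},
    {"ip": "10.0.0.6", "group": "tphon", "services": ["readonly.postgres"]},
]

records = zone_records(inventory)
```

One caveat with numbering by IP order is that the numbers shift when a host leaves, so anything that pins `1.postgres.tphon` in a configuration file would want a more stable scheme.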

In summary

Although DNS is the most obvious approach to resolution on the internet, there are other options, and in Amazon’s cloud infrastructure it makes sense to consider using security groups and tags, since you are likely to need to set them up anyway. It takes very little code, and is already being adopted by some clustering software.